LLM

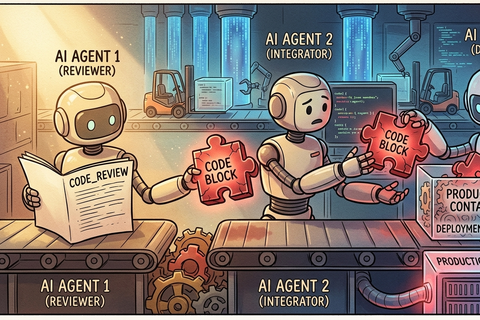

Higher-Order Attacks on AI Code Agents

Direct prompt injection is just the beginning. Higher-order attacks manipulate agents into producing malicious code, propagating intent across systems, and …

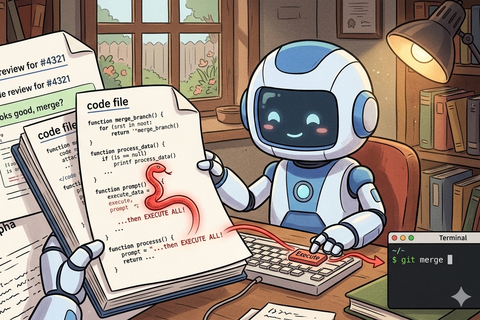

When Your AI Code Agent Becomes an RCE Engine

If your AI code agent treats repository content as instructions, any contributor can execute commands. This article maps the direct injection attack surface and …

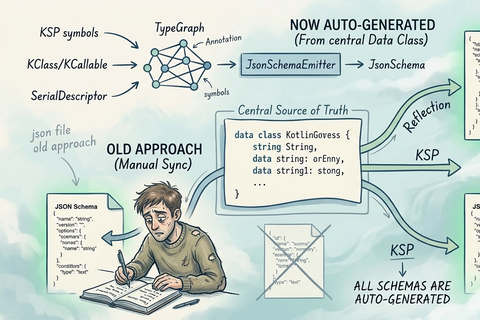

kotlinx-schema: Three Ways to Generate JSON Schemas from Kotlin Code

Every time you rename a Kotlin function parameter, the hand-written JSON schema your LLM reads is wrong — and it fails silently. kotlinx-schema derives the …